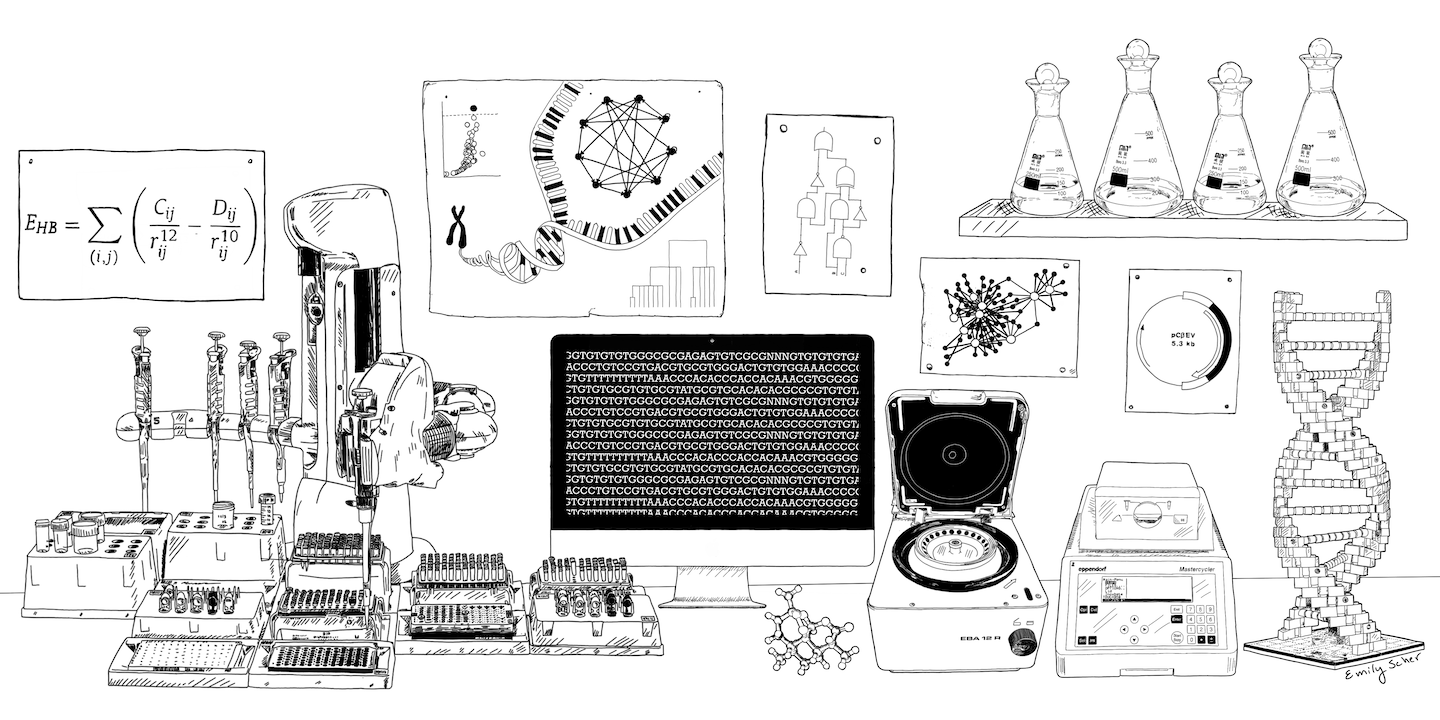

We are a multi-disciplinary research group based at the School of Biological Sciences at the University of Edinburgh. We are interested in understanding the molecular mechanisms underpinning complex phenotypes and diseases using two of the most disruptive technologies of the last 20 years: AI and synthetic biology. Our long-term goal is to reverse-engineer biological systems to create generative AI models to design, build and test biological agents that address problems in healthcare and industrial biotechnology.

Our group currently focuses on new biologics for the treatment of a class of rare diseases known as Lysosomal Storage Diseases (LSDs), by developing new AI-driven biologics engineering methods and by engineering microbial and mammalian cells to optimise biologics production.